You have more visibility into your AWS environment than at any point in cloud computing history. Cost Explorer tracks your spend. CloudWatch monitors your resources. Trusted Advisor flags best-practice violations. Security Hub aggregates findings from half a dozen services. Budgets sends you alerts when thresholds are crossed.

And yet — your team is no closer to actually optimizing anything than they were six months ago. The dashboards are open. The data is right there. But nothing is happening.

That's not a discipline problem. It's a design problem. You're drowning in data and starving for guidance.

The Dashboard Trap

AWS native tools are genuinely useful. They're also deliberately neutral. They show you what's happening in your environment without taking a position on what you should do about it. That's by design — AWS serves millions of customers with wildly different contexts, and opinionated defaults would be wrong more often than they'd be right.

For a team with a dedicated cloud architect or an experienced FinOps engineer, neutral tools are a solid foundation. They provide the raw material, and the expert provides the judgment.

But most small and mid-sized businesses don't have that person. The person responsible for AWS is a developer, an IT generalist, or a sysadmin who inherited the console login when the last person left. They can read the dashboards. They can see the numbers. What they can't do is translate a CloudWatch metric or a Cost Explorer line item into a specific, prioritized action — because that translation requires expertise they were never hired to have.

For these teams, data without opinion isn't insight. It's noise.

When Data Creates More Questions Than Answers

This isn't abstract. Here's what it looks like in practice:

The $200/Month NAT Gateway

Cost Explorer shows a NAT Gateway running at $200/month. Is that normal? High? You're not sure, because you've never benchmarked NAT Gateway costs. Should you replace it with VPC endpoints? Maybe, but which services are driving the traffic? Is it S3? ECR? Something else? Cost Explorer shows the total, but not the breakdown by destination. You'd need to dig into VPC Flow Logs, correlate with service endpoints, and estimate whether a Gateway Endpoint (free for S3) or Interface Endpoints (roughly $7.50/month each per Availability Zone, plus per-GB data processing charges) would actually save money. That analysis takes hours you don't have, so the $200/month stays.
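
The break-even arithmetic behind that question can be sketched in a few lines. This is a rough estimate, not a pricing tool: the per-GB rates are assumed list prices, the $7.50 monthly endpoint fee is the figure quoted above, and the function names are made up for illustration. Check current AWS pricing for your region before acting on the numbers.

```python
# Assumed prices in USD; verify against current AWS pricing for your region.
NAT_PER_GB = 0.045                 # NAT Gateway data processing (assumption)
INTERFACE_ENDPOINT_MONTHLY = 7.50  # fixed fee per endpoint, figure from the text
INTERFACE_ENDPOINT_PER_GB = 0.01   # Interface Endpoint data processing (assumption)


def gateway_endpoint_savings(gb_per_month: float) -> float:
    """Monthly savings from routing S3 traffic through a free Gateway Endpoint."""
    return gb_per_month * NAT_PER_GB


def interface_endpoint_savings(gb_per_month: float, endpoints: int = 1) -> float:
    """Monthly savings from moving traffic to Interface Endpoints (negative = loss)."""
    nat_cost = gb_per_month * NAT_PER_GB
    endpoint_cost = (endpoints * INTERFACE_ENDPOINT_MONTHLY
                     + gb_per_month * INTERFACE_ENDPOINT_PER_GB)
    return nat_cost - endpoint_cost


def interface_break_even_gb(endpoints: int = 1) -> float:
    """Monthly traffic above which Interface Endpoints beat the NAT Gateway."""
    per_gb_saving = NAT_PER_GB - INTERFACE_ENDPOINT_PER_GB
    return endpoints * INTERFACE_ENDPOINT_MONTHLY / per_gb_saving


if __name__ == "__main__":
    print(f"S3 via Gateway Endpoint, 2000 GB: ${gateway_endpoint_savings(2000):.2f}/month")
    print(f"ECR via Interface Endpoint, 100 GB: ${interface_endpoint_savings(100):.2f}/month")
    print(f"Interface Endpoint break-even: {interface_break_even_gb():.0f} GB/month")
```

Under these assumed rates, an Interface Endpoint only pays for itself once the traffic to that one service is in the low hundreds of GB per month, which is why the per-destination breakdown matters. The sketch also ignores the NAT Gateway's hourly charge, which stays on the bill unless the gateway is removed entirely.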

The 43 Security Hub Findings

Security Hub is telling you that 12 findings are critical and 31 are high. That sounds bad. But which ones are actually exploitable in your environment versus theoretical best-practice violations? Is the "S3 bucket allows public access" finding about your marketing site's asset bucket (intentional) or your application data bucket (a real problem)? What's the remediation for each one? How long will each fix take? Without that context, the findings are anxiety-inducing but not actionable. So you close the tab and come back to it next quarter.
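
To make the missing context concrete, here is a minimal triage sketch. It assumes findings have already been exported into simple records; the `Finding` class, the `INTENTIONALLY_PUBLIC` set, and the bucket names are all hypothetical and are not part of the Security Hub API. The point is that the split between "act on this" and "accepted risk" depends on knowledge the tool does not have.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    title: str
    severity: str  # "CRITICAL" or "HIGH"
    resource: str


# Hypothetical context that only your team knows: buckets meant to be public.
INTENTIONALLY_PUBLIC = {"marketing-site-assets"}


def triage(findings: list[Finding]) -> tuple[list[Finding], list[Finding]]:
    """Split findings into (act_on, accepted), with critical items first."""
    act_on, accepted = [], []
    for f in findings:
        is_public_finding = "public access" in f.title.lower()
        if is_public_finding and f.resource in INTENTIONALLY_PUBLIC:
            accepted.append(f)  # intentional, document it and move on
        else:
            act_on.append(f)
    # Stable sort keeps the original order within each severity level.
    act_on.sort(key=lambda f: 0 if f.severity == "CRITICAL" else 1)
    return act_on, accepted


if __name__ == "__main__":
    sample = [
        Finding("S3 bucket allows public access", "HIGH", "marketing-site-assets"),
        Finding("S3 bucket allows public access", "CRITICAL", "app-data"),
    ]
    act_on, accepted = triage(sample)
    print("Act on:", [f.resource for f in act_on])
    print("Accepted:", [f.resource for f in accepted])
```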

The 8% CPU RDS Instance

CloudWatch shows your production database running at 8% average CPU. That seems like a clear candidate for downsizing. But CPU isn't the whole story — what about memory pressure? Connection count? Query latency at peak? Will the next deploy spike it? And if you do downsize, what instance type should you move to? The metrics tell you something might be wrong, but they don't tell you what to do about it or what's safe. So you leave it alone, because the cost of guessing wrong on a production database is a lot higher than the cost of overpaying.
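
A downsizing check that looks beyond average CPU might be sketched like this. The thresholds are illustrative assumptions, not AWS guidance: they encode the rough idea that dropping one instance size halves capacity, so anything above about half of the safe limit today becomes a risk afterwards. The `DbMetrics` fields are hypothetical summaries you would derive from CloudWatch metrics such as `CPUUtilization`, `FreeableMemory`, and `DatabaseConnections`.

```python
from dataclasses import dataclass


@dataclass
class DbMetrics:
    avg_cpu_pct: float              # average CPU over the review window
    peak_cpu_pct: float             # highest CPU over the review window
    min_freeable_memory_pct: float  # lowest free memory as a share of instance RAM
    peak_connections_pct: float     # peak connections as a share of the connection limit


def downsize_blockers(m: DbMetrics) -> list[str]:
    """Return reasons NOT to drop one instance size; an empty list means no blockers found."""
    blockers = []
    # Assumed thresholds: halving capacity roughly doubles each utilization figure.
    if m.peak_cpu_pct > 40:
        blockers.append("peak CPU would likely exceed 80% on a smaller instance")
    if m.min_freeable_memory_pct < 50:
        blockers.append("memory headroom is too small to survive halving RAM")
    if m.peak_connections_pct > 50:
        blockers.append("peak connections may hit the smaller instance's limit")
    return blockers


if __name__ == "__main__":
    quiet_db = DbMetrics(avg_cpu_pct=8, peak_cpu_pct=25,
                         min_freeable_memory_pct=70, peak_connections_pct=20)
    print(downsize_blockers(quiet_db) or "No blockers found at these thresholds")
```

Even an empty result is only a starting point: it says nothing about query latency at peak or what the next deploy will do, which is exactly the judgment the raw metrics leave to you.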

Every one of these scenarios ends the same way: the data surfaces a question, but the team is left to answer it themselves. Most of the time, they don't.

What Opinionated Guidance Actually Looks Like

Now imagine the same three scenarios with prescriptive recommendations instead of raw data:

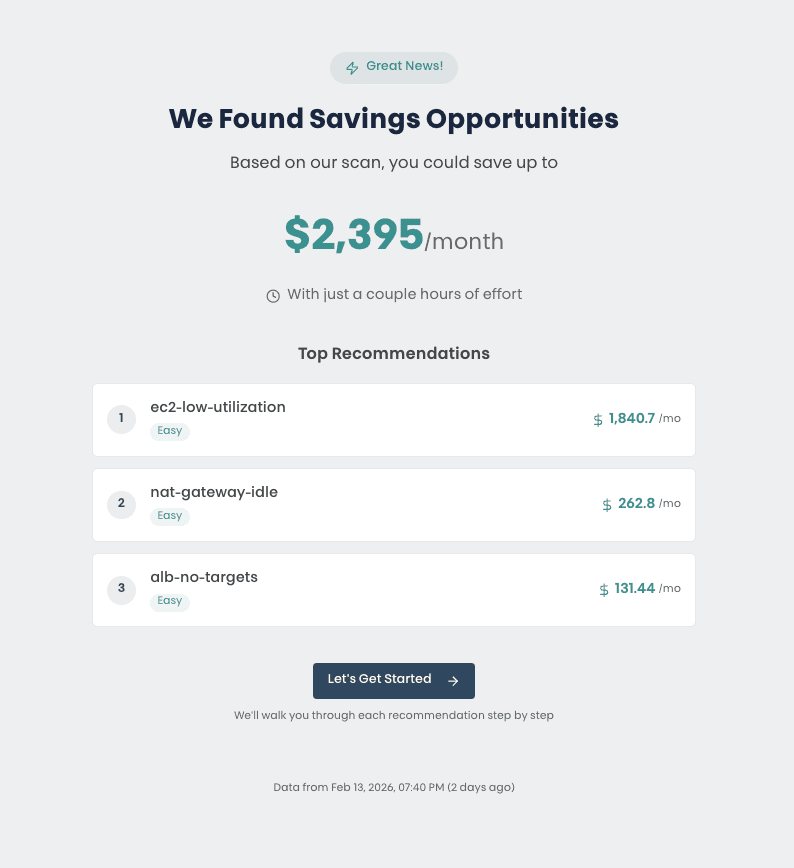

The difference is night and day. The first version leaves you with a research project. The second gives you a decision: yes or no. The analysis is already done. The context is already there. The effort estimate tells you whether it's a ten-minute fix or a change-window project. And a direct link takes you to the specific resource that needs attention.

This is what opinionated guidance means in practice. Not just "here's what we found," but "here's what we recommend, here's why, here's how hard it is, and here's where to start." It's the difference between handing someone a topographic map and handing them turn-by-turn directions.
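
One way to picture such a recommendation is as a small record that carries the finding, the action, the reason, the effort, and the link together. The sketch below is purely illustrative: the `Recommendation` fields, the savings-per-minute ranking, and the sample values and URLs are assumptions, not a description of any particular platform's data model.

```python
from dataclasses import dataclass


@dataclass
class Recommendation:
    finding: str            # "here's what we found"
    action: str             # "here's what we recommend"
    rationale: str          # "here's why"
    effort_minutes: int     # "here's how hard it is"
    monthly_savings: float  # estimated USD per month
    resource_link: str      # "here's where to start"


def prioritize(recs: list[Recommendation]) -> list[Recommendation]:
    """Order recommendations by estimated savings per minute of effort, best first."""
    return sorted(recs,
                  key=lambda r: r.monthly_savings / max(r.effort_minutes, 1),
                  reverse=True)


if __name__ == "__main__":
    # Made-up sample values for illustration only.
    recs = [
        Recommendation("RDS instance at 8% CPU", "Drop one instance size",
                       "Sustained low utilization", 120, 150.0,
                       "https://example.com/rds/prod-db"),
        Recommendation("NAT Gateway at $200/month", "Add an S3 Gateway Endpoint",
                       "S3 traffic dominates NAT usage", 10, 90.0,
                       "https://example.com/vpc/nat-1"),
    ]
    for r in prioritize(recs):
        print(f"{r.action}: ~${r.monthly_savings:.0f}/month for {r.effort_minutes} min")
```

Ranking by savings per minute is just one possible choice; a real prioritization would also weigh risk and security impact.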

When You Need More Than a Platform

Even the best automated recommendations have a boundary. Some findings require context that only exists inside your organization — why a particular architecture was chosen, which workloads have seasonal patterns, what compliance constraints apply. Some remediation steps are straightforward for an experienced cloud engineer but intimidating for a team that's never modified a VPC configuration or resized a production database.

That's where Advisory Support comes in. It isn't a separate product or an upsell. It's bundled directly into the platform experience because the whole point is that customers shouldn't be handed a list of findings and left to figure it out alone. The platform provides the automated intelligence. Advisory Support provides the human judgment. Together, they deliver the kind of environment-specific guidance that would typically require hiring a cloud consultant or bringing on a dedicated operations engineer, without the $15,000-to-$50,000 price tag that puts traditional consulting out of reach for most small and mid-sized teams.

Key Takeaways

- More data doesn't mean better decisions. AWS gives you incredible visibility, but without prescriptive guidance, that data creates more questions than answers for teams without deep cloud expertise.

- The real gap isn't finding problems — it's knowing what to do next. Every tool can surface issues. The hard part is translating findings into specific, prioritized, effort-rated actions.

- Opinionated beats neutral for teams wearing multiple hats. When you don't have a dedicated cloud architect, you need recommendations that come with context, not just charts that leave interpretation to you.

- Advisory Support closes the last mile. When automated recommendations need a human touch — environment-specific context, implementation walkthroughs, prioritization help — having engineers available at no extra cost turns findings into outcomes.